Sunday, May 20, 2007

Moving

http://beyondthecode.wordpress.com

All future posts can be found there.

Wednesday, March 21, 2007

The Nebulous Notion of "Computer Literacy"

Techdirt stumbled onto a problem I think is worthy of note the other day.

Apparently studies have shown that a lack of good IT literacy can have someone waste up to 40 minutes per day. Of course, the real question is how to solve this problem. As the article notes, the answer is in better training for employees -- but it's not yet clear what kind of training is really needed. Perhaps part of the problem is that it's different elements of basic IT skills that are tripping up people and there isn't really one silver bullet for solving all those problems.

I think this presents an interesting paradigm shift that we'll have to make in our day-to-day language and interaction in the near future. Namely, the question "are you computer literate?" no longer carries any real meaning.

Because computers can be used to do a thousand things, asking if one knows how to use a computer has become akin to asking if one knows how to use an education. To give a meaningful response, the counter question needs to be asked immediately: "Well, to do what?"

Several sectors of society have already shifted in this direction, asking for competence in specific software packages instead of general literacy. After all, in-house, a company tends to devote its entire IT layout to Microsoft or Lotus or some other all-inclusive package. Without knowledge of that application, an employee would require nearly as much training as one who knew nothing about computers at all.

However, the more important shift is a cultural one, one that will move the question of computer competency into the background instead of the foreground. I suppose this will move hand in hand with the gradual shift towards a more ubiquitous computing experience. I know I look forward to the day when computers fade nearly entirely into the background, allowing for free-form creativity and action from their users.

Technorati Tags: literacy, computing, ubiquitouscomputing

Tuesday, March 20, 2007

Where's the Counterpoint?

There has been a lot of media attention paid to the dangers that social networking websites like MySpace can create for our children. Such stories often star shady abductors misspelling words and carefully luring children into their web each night, preying on their naivety and innocence. However, as one might expect if one looked at any national or state statistics, abductions have not risen dramatically since the rise of these websites. Nothing terribly significant has occurred since then.

As I watch the one-sided news coverage, I find myself asking a question: why aren't there any voices present in the mass media countering this argument? It seems like every good point should have a counterpoint, just as every story should have (at least) two sides. However, on this issue, the voice of reason seems to be especially lacking in popular media.

It's not lacking elsewhere, however. Adam Thierer at The Technology Liberation Front has a new study out, and his most recent post on the subject contains the following counterpoint among others:

A final myth about social networking that I discuss in my paper is that teens are at a much greater risk in these online communities than they were in the offline social communities of the past. It’s clear that part of what is driving the push to regulate social networking sites is that many adults simply don’t understand this new technology and have created a sort of “moral panic” around it. But parents misunderstanding teens—or a new trend or technology that teens love—is really nothing new. For example, today’s grandparents will recall that when they were teenagers in the 1950s and 1960s, their parents worried about their hanging out at burger joints and roller rinks. And today’s parents will remember that in the 1970s and 1980s, their parents were concerned about their hanging around shopping malls and video arcades. Those places were the social networking sites of their eras. And so it continues with the networking sites that today’s youngsters enjoy: digital, interactive websites.

It's an argument that eloquently and politely debunks the hysteria surrounding social networking sites. Hearing its reasoning makes me wonder twice as hard why this sort of thing isn't heard from the popular media on the heels of each scare story.

If the goal of the news networks is to scare people, I can understand its absence. However, I think the point Thierer raises here is both simple and engaging. It gets people to think about times from their past (most likely positive ones) and settles their minds a little bit about the future. Thus his argument should have an appeal to most Americans, qualifying it as mainstream material.

Yet its absence persists. So why isn't it on the nightly news next to the scare stories? Is it too hopeful for society to be more stable than we thought? Is it too complacent with criminals we should obviously find evil and detestable? Is it too reasonable to ask the public to use its intellect instead of its base emotional response?

I don't know the answer, but having taken no journalism or media courses, it's interesting to try and guess from an economic standpoint exactly what influences demand to reach this equilibrium. Does the public desire to be scared and nothing else? Are the network executives keeping the scare stories unchallenged for a reason? Is there greater profit in scaring America rather than informing America? Is there another influence I don't see?

Granted, there is some challenge to the mainstream ideas in a few public arenas, like some blogs and the handful of debate shows on television. While I believe those sources are vital to the public discourse, I think their influence simply isn't enough to balance out the presentation in the mass media. So I look to the Nightly News and ask myself again...

Where's the counterpoint?

Friday, March 16, 2007

The ATHF Witch Trials

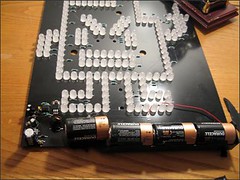

Let's begin with a picture.

Now for some background.

Recently, a publicity stunt by a late night Cartoon Network show, Aqua Teen Hunger Force, caused a big scare in Boston. So reports The Boston Channel:

BOSTON -- Turner Broadcasting plans to take responsibility for the "hoax devices" that were found at several locations in and around Boston Wednesday that forced police bomb units to scramble throughout the area.

The incidents were part of a marketing campaign that involved a character from the cartoon show "Aqua Teen Hunger Force."

"The 'packages' in question are magnetic lights that pose no danger. They are part of an outdoor marketing campaign in 10 cities in support of Adult Swim's animated television show 'Aqua Teen Hunger Force,'" Turner Broadcasting, the parent company of Cartoon Network, said in a statement.

The company said that they have been in place for two to three weeks in Boston, New York, Los Angeles, Chicago, Atlanta, Seattle, Portland, Austin, San Francisco and Philadelphia.

Turner Broadcasting is in contact with local and federal law enforcement on the exact location of the billboards, according to the statement, and regrets that they were mistakenly thought to pose any danger.

The cartoon airs as part of the Adult Swim late-night block of programs on the Cartoon Network. It features characters called "mooninites," who were pictured on the found devices. A feature length film based on the cartoon is scheduled to be released late next month.

Gov. Deval Patrick praised the response of law enforcement and said that he was "dismayed to learn that many of the devices are a part of a marketing campaign by Turner Broadcasting."

"This stunt has caused considerable disruption and anxiety in our community. I understand that Turner Broadcasting has purported to apologize for this. I intend nonetheless to consult with the attorney general and other advisors about what recourse we may have," Patrick said.

Boing Boing picked up the story and ran with it, because the case highlights the conflict between fighting terror in America and preserving freedom of speech. Why did people perceive this image as a terrorist threat? Why didn't they shake their heads and move on? Was it the finger? Was it the blinking lights? Was it the size of the objects? I wonder.

Techdirt also weighed in, noting that if your only anti-terrorism tool is a bomb squad, suspicious objects start to look more and more explosive.

While I don't think that the Boston police department believed in a ridiculous scenario like this video, I do think people overreacted to what they saw as merely unfamiliar and offensive.

This leads to my concern for free speech. Having seen several episodes of the show, I wouldn't have been surprised walking past that picture, but another person may have thought differently. According to Boing Boing, the law being used in this scenario focuses solely on the intent of the devices "to cause anxiety, unrest, fear or personal discomfort to any person or group of persons."

This seems like a pretty flexible piece of legislation, especially when the intent of the creators gets grossly misconstrued in its transmission to the public. The situation becomes especially tenuous when it's the judges who, after the fact, "shall, after a conviction, conduct a hearing to ascertain the extent of costs incurred, damages and financial loss suffered by local, county or state public safety agencies and the amount of property damage caused as a result of the violation of this section." What check is there to keep people from claiming that everything was intended to be a bomb, turning the streets into a scene similar to the Salem Witch Trials (as has been argued by others)?

So in the end, the thing I'd like to see is a calmer, more rational public with regard to terror. It seems like a contradiction in terms, not to mention an impossible feat given the daily amount of terror-based news we're supplied with. However, unless we want to start being scanned everywhere we go, having every accessory checked by some Big Brother at every turn, we had better relax and start realistically counting the odds of a terror attack. Otherwise we're in danger of hastily letting go of some of our most treasured liberties.

Monday, January 08, 2007

College Recruitment and Web 2.0

Students want to feel like they are being incorporated into the campus community, and many of them therefore wanted personal contact with faculty and already-enrolled students, not just with admissions counselors. 83 percent of the high-schoolers surveyed said that they would read a blog written by a faculty member, while 63 percent would read one written by a current student, and 57 percent would like to create a personalized profile page about themselves on the school's website.

While I agree with a number of the suggestions, especially the student and faculty blogs, if I were an administrator I would proceed with extreme caution. Not just because Web 2.0 is new and scary and different, but because of the nature of Web 2.0 itself.

While the age of user-created content is undoubtedly a good thing, utilizing it in a college recruitment setting takes power from the universities, something I doubt they would approve of. Imagine: if you were in charge of your school's image and didn't have the reputation of Harvard, Yale, or Duke, would you really want to entrust that image to the masses? Especially when talking about individualized web pages on a school's server, things could get unruly very, very fast.

Especially since the data shows that students still want to receive information via snail mail, I don't believe that this survey reflects a growing desire for technological use in the recruitment process. Instead, I think it shows that students want to exploit every opportunity to get into their school of choice, whether those opportunities are traditional or high-tech. Raising the bar to apply for a school would presumably weed out more students, giving the ones who really want to get in a better chance.

To sum up, I do believe more communication between a school's faculty and students and its prospective students has the potential to be a good thing. But it involves an inherent loss of control on the university's part, so we won't see drastic shifts soon; more likely we'll witness a few new ideas come into play each year. Also, I think the survey results would have been the same if students had simply been offered more options instead of more Web 2.0-focused options.

Thursday, December 28, 2006

Shaping P2P Traffic

The funny thing, though, is that whether or not it really is a burden, the idea of using traffic shaping is absolutely going to backfire. As we've already discussed, the more ISPs try to snoop on or "shape" your internet usage, the more that's going to be a great selling point for encryption. People are going to increasingly encrypt all of their internet usage, from regular surfing, to file sharing to VoIP -- as it makes it that much more difficult to figure out what kind of traffic is what and to do anything with it.

A few things that they didn't explicitly point out I find to be particularly important.

- The power of the individual is sticky

It's tough when you're offering a platform like an internet connection. While users constantly search for new applications and uses of their connection and bandwidth, the telcos want things to stay the same as much as possible in order to provide only what is needed from a willingness-to-pay perspective, thus earning a predictable, reasonable rate of return on their investments. However, when the internet gets involved, that dichotomy is thrown out of balance, because the technology evolves at a breakneck pace, keeping the space virtually unpredictable.

- A rising level of human capital will be less likely to tolerate this activity in the future

As reported by Ars Technica, a new poll by Zogby International and 463 Communications showed that most (83%) of the respondents believed that the average 12-year-old knows more about the Internet than do members of Congress. I believe this is more than a matter of perception, and it will spell trouble for the profits of telcos down the line unless they can learn to adapt more quickly to their market. More educated consumers will begin to demand more from their internet connections and will either switch providers or find ways to keep their connections at their previous level of functionality via encryption or some other method. This isn't necessarily bad, but it does mean that the present tension from bandwidth-hungry consumers is only likely to increase in the future.

Thursday, December 14, 2006

Leave it to the Government

Soaring metals prices mean that the value of the metal in pennies and nickels exceeds the face value of the coins. Based on current metals prices, the value of the metal in a nickel is now 6.99 cents, while the penny's metal is worth 1.12 cents, according to the U.S. Mint.

That has piqued concern among government officials that people will melt the coins to sell the metal, leading to potential shortages of pennies and nickels.

I don't see this as a sign of a failing economy, but I do see this as an interesting quirk when it comes to money. Because money (historically at least) is tangible, it has to be made out of one type of material or another. As inflation rises steadily through growth of the money supply, eventually those materials could become more valuable in raw form than as the money they're coined into.
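To put rough numbers on that gap, here is a minimal sketch in Python using only the two metal values quoted above from the U.S. Mint. The helper name `melt_premium` is my own, and the calculation assumes melting and selling the metal costs nothing, which it certainly would not in practice.

```python
# Metal value per coin in cents, as quoted above from the U.S. Mint.
METAL_VALUE_CENTS = {"penny": 1.12, "nickel": 6.99}
# Face value per coin in cents.
FACE_VALUE_CENTS = {"penny": 1.0, "nickel": 5.0}


def melt_premium(coin):
    """Hypothetical helper: fraction by which metal value exceeds face value.

    Ignores melting, transport, and selling costs (an assumption).
    """
    return METAL_VALUE_CENTS[coin] / FACE_VALUE_CENTS[coin] - 1.0


for coin in ("penny", "nickel"):
    print(f"{coin}: metal is worth {melt_premium(coin):.1%} more than face value")
```

By this back-of-the-envelope measure, the nickel is the far more tempting coin to melt, since its metal is worth roughly 40 percent more than its face value, against about 12 percent for the penny.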

However, I fully support the government's move here for a very simple reason. Melting down currency of any kind directly changes the money supply in an economy. Quite frankly, I'm not willing to let individuals, foreign or domestic, be in charge of the money supply of my primary currency.

On a personal level, the value of the money I hold may increase due to a falling money supply, but deflation is often indicative of more harm than good. Additionally, increased volatility in the value of our currency would scare investors away, weakening the economy as a whole.

To briefly hit on a subject too large for one post, this activity serves to bring the currency back to the gold standard, or in this case the copper standard. After a while, the prices of pennies and nickels would re-establish themselves at a penny and a nickel, restoring "convertibility" from the penny to its metal. This would only work in one direction though, because individuals couldn't convert metal back into currency.

It's an interesting topic, with many far-reaching ramifications. However, since most of them only serve to destabilize the economy, the only suitable option is to leave the money supply in the government's hands. It's better for everyone that way.

Technorati Tags: Money, money supply